What is Temporal Hierarchical Forecasting?

Temporal hierarchical forecasting is a technique that improves prediction accuracy by leveraging the structure of time series data across multiple temporal resolutions such as hourly, daily, weekly, and monthly.

Rather than modeling just one time scale, it generates forecasts at each level of the temporal hierarchy and then reconciles them to ensure consistency (e.g., the sum of hourly forecasts aligns with the daily total).

This approach captures both high-frequency variations and long-term trends, allowing for coherent forecasts across time scales.

It is particularly effective in domains like energy demand, retail sales, and transportation planning, where decisions depend on both granular and aggregated time-based insights.
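To make the idea of a temporal hierarchy concrete, the following minimal sketch builds three resolutions from one series with pandas. The series values and dates are hypothetical and chosen only for illustration; the point is that observed data is coherent by construction, because every upper level is a sum of the level below it.

```python
import numpy as np
import pandas as pd

# Hypothetical hourly series covering two full days (48 observations).
hours = pd.date_range("2024-01-01", periods=48, freq="h")
hourly = pd.Series(np.arange(48, dtype=float), index=hours)

# Build the upper levels of the temporal hierarchy by summing the bottom level.
six_hourly = hourly.resample("6h").sum()
daily = hourly.resample("D").sum()

# Observed data is coherent by construction: every level has the same total.
print(len(hourly), len(six_hourly), len(daily))
print(hourly.sum(), six_hourly.sum(), daily.sum())
```

Forecasts produced independently at each of these levels generally do not satisfy this property, which is what reconciliation addresses.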

Tutorial

In this tutorial, we demonstrate how to use TimeGPT for temporal hierarchical forecasting. We use a dataset with an hourly frequency and create forecasts with TimeGPT at both the hourly and the 2-hourly level. The 2-hourly series is the hourly series aggregated (summed) over consecutive 2-hour windows. We then apply temporal reconciliation techniques to improve the forecasting performance of TimeGPT.

Step 1: Import and Initialize

Let’s import the NixtlaClient and initialize it with an API key.

import numpy as np

import pandas as pd

from utilsforecast.evaluation import evaluate

from utilsforecast.plotting import plot_series

from utilsforecast.losses import mae, rmse

from nixtla import NixtlaClient

# Initialize NixtlaClient

nixtla_client = NixtlaClient(

# api_key = 'my_api_key_provided_by_nixtla'

)

Step 2: Load and Prepare Data

First, let’s read and process the dataset.

df = pd.read_csv(

'https://raw.githubusercontent.com/Nixtla/transfer-learning-time-series/main/datasets/electricity-short-with-ex-vars.csv'

)

df['ds'] = pd.to_datetime(df['ds'])

df_sub = df.query('unique_id == "DE"')

df_train = df_sub.query('ds < "2017-12-29"')

df_test = df_sub.query('ds >= "2017-12-29"')

df_train.shape, df_test.shape

plot_series(

df_train[['unique_id', 'ds', 'y']][-200:],

forecasts_df=df_test[['unique_id', 'ds', 'y']].rename(columns={'y': 'test'})

)

Step 3: Temporal Hierarchical Forecasting

Temporal Aggregation

We are interested in generating forecasts for the hourly and 2-hourly

windows. We can generate these forecasts using TimeGPT. After generating

these forecasts, we make use of hierarchical forecasting techniques to

improve the accuracy of each forecast.

We first define the temporal aggregation spec. The spec is a dictionary in which each key is the name of an aggregation and each value is the number of bottom-level timesteps that are summed in that aggregation.

In this example, we choose a temporal aggregation of a 2-hour period and a

1-hour period (the bottom level).

spec_temporal = { "2-hour-period": 2, "1-hour-period": 1 }

from hierarchicalforecast.utils import aggregate_temporal

Y_train, S_train, tags_train = aggregate_temporal(

df=df_train[['unique_id', 'ds', 'y']], spec=spec_temporal

)

Y_test, S_test, tags_test = aggregate_temporal(

df=df_test[['unique_id', 'ds', 'y']], spec=spec_temporal

)

Y_train contains our training data, for both 1-hour and 2-hour periods.

For example, if we look at the first two timestamps of the training data,

we have a 2-hour period ending at 2017-10-22 01:00, and two 1-hour periods,

the first ending at 2017-10-22 00:00, and the second at 2017-10-22 01:00,

the latter corresponding to when the first 2-hour period ends.

Also, the ground truth value y of the first 2-hour period is 38.13, which

is equal to the sum of the first two 1-hour periods (19.10 + 19.03). This

showcases how the higher frequency 1-hour-period has been aggregated into

the 2-hour-period frequency.

Y_train.query("ds <= '2017-10-22 01:00:00'")

|  | temporal_id | unique_id | ds | y |
|---|---|---|---|---|
| 0 | 2-hour-period-1 | DE | 2017-10-22 01:00:00 | 38.13 |
| 816 | 1-hour-period-1 | DE | 2017-10-22 00:00:00 | 19.10 |
| 817 | 1-hour-period-2 | DE | 2017-10-22 01:00:00 | 19.03 |
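The aggregation described above can be mimicked by hand. The sketch below is not how `aggregate_temporal` is implemented; it only illustrates the same arithmetic with a pandas group-by. The first two values come from the table above, while the last two values and the `window` variable are assumptions made for illustration.

```python
import numpy as np
import pandas as pd

# First two values are taken from the table above; the last two are hypothetical.
bottom = pd.DataFrame({
    "ds": pd.date_range("2017-10-22 00:00", periods=4, freq="h"),
    "y": [19.10, 19.03, 18.50, 18.20],
})

window = 2  # bottom-level timesteps per aggregate, as in spec_temporal["2-hour-period"]

# Assign each consecutive pair of hours to one 2-hour period.
group = np.arange(len(bottom)) // window

# Sum the values and stamp each aggregate with the last hour of its window.
two_hour = bottom.groupby(group).agg(ds=("ds", "max"), y=("y", "sum"))
print(two_hour)
```

The first aggregated row carries the timestamp 2017-10-22 01:00 and the value 19.10 + 19.03 = 38.13, in line with the table.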

S_train and S_test detail how the lowest temporal

granularity (hour) can be aggregated into the 2-hour periods. For example,

the first 2-hour period, named 2-hour-period-1, can be constructed by

summing the first two hour-periods, 1-hour-period-1 and 1-hour-period-2,

which we also verified above in our inspection of Y_train.

|  | temporal_id | 1-hour-period-1 | 1-hour-period-2 | 1-hour-period-3 | 1-hour-period-4 |
|---|---|---|---|---|---|
| 0 | 2-hour-period-1 | 1.0 | 1.0 | 0.0 | 0.0 |
| 1 | 2-hour-period-2 | 0.0 | 0.0 | 1.0 | 1.0 |
| 2 | 2-hour-period-3 | 0.0 | 0.0 | 0.0 | 0.0 |
| 3 | 2-hour-period-4 | 0.0 | 0.0 | 0.0 | 0.0 |
| 4 | 2-hour-period-5 | 0.0 | 0.0 | 0.0 | 0.0 |
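For intuition, a summing matrix with this pattern can be written down directly in NumPy. This is a toy sketch with four bottom-level hours, not the output of `aggregate_temporal`; stacking an identity block under the aggregate rows follows the usual hierarchical-forecasting convention and is an assumption here, as are the last two values of `y_bottom`.

```python
import numpy as np

n_bottom = 4   # number of 1-hour periods in this toy example
window = 2     # hours per 2-hour period

# Each aggregate row has ones over the hours it covers.
S_agg = np.kron(np.eye(n_bottom // window), np.ones((1, window)))

# Stack the identity underneath so bottom-level periods map to themselves.
S = np.vstack([S_agg, np.eye(n_bottom)])

y_bottom = np.array([19.10, 19.03, 18.50, 18.20])  # last two values are hypothetical
y_all = S @ y_bottom  # aggregates first, then the bottom-level values

print(S_agg)
print(y_all)
```

Multiplying the summing matrix by the bottom-level values yields every level of the hierarchy at once, which is the relationship reconciliation methods rely on.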

Computing Base Forecasts with TimeGPT

Now, we need to compute base forecasts for each temporal aggregation. The

following cell computes the base forecasts for each temporal aggregation

in Y_train using TimeGPT.

Note that both frequency and horizon are different for each temporal aggregation. In this example, the lowest level has an hourly frequency and a horizon of 48. The 2-hour-period aggregation thus has a 2-hourly frequency with a horizon of 24.
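The relationship between the spec, the horizon, and the frequency can be sketched without calling TimeGPT. The snippet below assumes a bottom-level horizon of 48 hours starting at the train/test split date and that each aggregate is stamped with the last hour of its window; the `results` dictionary is a hypothetical helper used only to collect the output.

```python
import pandas as pd

spec_temporal = {"2-hour-period": 2, "1-hour-period": 1}
bottom_horizon = 48  # hourly test timestamps in this tutorial

# Test timestamps at the bottom level (start date taken from the train/test split).
ds_bottom = pd.date_range("2017-12-29 00:00", periods=bottom_horizon, freq="h")

results = {}
for name, window in spec_temporal.items():
    # Each aggregate is stamped with the last hour of its window.
    ds_level = ds_bottom[window - 1 :: window]
    horizon = len(ds_level)
    freq = pd.infer_freq(ds_level)
    results[name] = (horizon, freq)
    print(name, horizon, freq)
```

The exact spelling of the inferred frequency alias (for example `h` versus `H`) depends on the installed pandas version.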

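Y_hats = []
id_cols = ["unique_id", "temporal_id", "ds", "y"]

# Create forecasts for each temporal level separately
for level, temporal_ids_train in tags_train.items():
    # Select the train and test rows that belong to this level
    Y_level_train = Y_train.query("temporal_id in @temporal_ids_train")
    temporal_ids_test = tags_test[level]
    Y_level_test = Y_test.query("temporal_id in @temporal_ids_test")
    # Each level has its own frequency and forecast horizon
    # (freq_level is inferred for inspection; it is not passed to forecast here)
    freq_level = pd.infer_freq(Y_level_train["ds"].unique())
    horizon_level = Y_level_test["ds"].nunique()
    # Create the base forecasts with TimeGPT
    Y_hat_level = nixtla_client.forecast(
        df=Y_level_train[["ds", "unique_id", "y"]],
        h=horizon_level
    )
    # Add the test set values to the forecasts
    Y_hat_level = Y_hat_level.merge(Y_level_test, on=["ds", "unique_id"], how="left")
    # Put the id columns first for readability
    Y_hat_cols = id_cols + [col for col in Y_hat_level.columns if col not in id_cols]
    Y_hat_level = Y_hat_level[Y_hat_cols]
    Y_hats.append(Y_hat_level)

# Combine the forecasts of all levels into a single DataFrame
Y_hat = pd.concat(Y_hats, ignore_index=True)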

Y_hat contains all the forecasts but they are not coherent

with each other. For example, consider the forecasts for the first time

period of both frequencies.

|  | unique_id | temporal_id | ds | y | TimeGPT |
|---|---|---|---|---|---|
| 0 | DE | 2-hour-period-1 | 2017-12-29 01:00:00 | 10.45 | 16.949455 |
| 24 | DE | 1-hour-period-1 | 2017-12-29 00:00:00 | 9.73 | -0.241482 |
| 25 | DE | 1-hour-period-2 | 2017-12-29 01:00:00 | 0.72 | -3.456478 |

The ground truth value y for the first 2-hour period is 10.45, and the sum of the ground truth values for the first two 1-hour periods is 9.73 + 0.72 = 10.45. Hence, these values are coherent with each other.

However, the forecast for the first 2-hour period is 16.95, whereas the sum of the forecasts for the first two 1-hour periods is -0.24 + (-3.46) = -3.70. Hence, these forecasts are clearly not coherent with each other.

We will use reconciliation techniques to make these forecasts coherent with each other and to improve their accuracy.
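The size of the incoherence can be checked with a few lines of arithmetic. The numbers below are copied from the forecast table above; the variable names are chosen only for this illustration.

```python
# Base forecasts for the first 2-hour period and its two hours (values from the table above).
forecast_2h = 16.949455
forecast_1h = [-0.241482, -3.456478]

# Coherence requires the aggregate forecast to equal the sum of its parts.
sum_of_hours = sum(forecast_1h)
gap = forecast_2h - sum_of_hours

print(round(sum_of_hours, 2))
print(round(gap, 2))
```

A gap of zero would mean the two levels agree; here the 2-hour forecast exceeds the sum of the hourly forecasts by more than 20 units.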

Forecast Reconciliation

We can use the HierarchicalReconciliation class to reconcile the forecasts.

In this example we use MinTrace. Note that we have to set temporal=True

in the reconcile function.

The S parameter was renamed to S_df in hierarchicalforecast. Make sure

you are using S_df when calling reconcile.

from hierarchicalforecast.methods import MinTrace

from hierarchicalforecast.core import HierarchicalReconciliation

reconcilers = [MinTrace(method="wls_struct")]

hrec = HierarchicalReconciliation(reconcilers=reconcilers)

Y_rec = hrec.reconcile(Y_hat_df=Y_hat, S_df=S_test, tags=tags_test, temporal=True)
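To give some intuition for what this step does, here is a minimal NumPy sketch of weighted least squares reconciliation with structural weights, applied to the first 2-hour period only. It uses the textbook formula and assumes this is the weighting that `method="wls_struct"` refers to; it is not the library's implementation, and the variable names are illustrative.

```python
import numpy as np

# Summing matrix for one 2-hour period and its two 1-hour periods.
S = np.array([
    [1.0, 1.0],  # 2-hour-period-1 = hour 1 + hour 2
    [1.0, 0.0],  # 1-hour-period-1
    [0.0, 1.0],  # 1-hour-period-2
])

# Base TimeGPT forecasts from the table above, in the same row order as S.
y_hat = np.array([16.949455, -0.241482, -3.456478])

# Structural weights: each series is weighted by how many bottom series it sums.
W_inv = np.diag(1.0 / S.sum(axis=1))

# Weighted least squares estimate of the bottom level, then re-aggregate with S.
bottom = np.linalg.solve(S.T @ W_inv @ S, S.T @ W_inv @ y_hat)
y_rec = S @ bottom

print(y_rec)
```

For these three base forecasts the sketch gives roughly 6.63 for the 2-hour period and 4.92 and 1.71 for the two hours, so the reconciled values add up exactly by construction.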

Step 4: Evaluation

The HierarchicalForecast package includes the evaluate function to

evaluate the different hierarchies.

We evaluate the temporally aggregated forecasts across all temporal aggregations.

import hierarchicalforecast.evaluation as hfe

evaluation = hfe.evaluate(

df=Y_rec.drop(columns='unique_id'),

tags=tags_test,

metrics=[mae],

id_col='temporal_id'

)

numeric_cols = evaluation.select_dtypes('number').columns

evaluation[numeric_cols] = evaluation[numeric_cols].map('{:.3}'.format).astype(float)

evaluation

|  | level | metric | TimeGPT | TimeGPT/MinTrace_method-wls_struct |
|---|---|---|---|---|
| 0 | 2-hour-period | mae | 25.2 | 12.00 |
| 1 | 1-hour-period | mae | 18.5 | 6.16 |
| 2 | Overall | mae | 20.8 | 8.12 |
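The improvement is easier to read as a relative reduction in MAE. The sketch below copies the values from the evaluation table above into a small DataFrame; the column names `base`, `reconciled`, and `reduction_pct` are made up for this example.

```python
import pandas as pd

# MAE values copied from the evaluation table above.
mae_table = pd.DataFrame({
    "level": ["2-hour-period", "1-hour-period", "Overall"],
    "base": [25.2, 18.5, 20.8],
    "reconciled": [12.00, 6.16, 8.12],
})

# Relative MAE reduction achieved by reconciliation, in percent.
mae_table["reduction_pct"] = (
    100 * (mae_table["base"] - mae_table["reconciled"]) / mae_table["base"]
).round(1)

print(mae_table)
```

On this test window, reconciliation reduces the MAE by more than half at every level.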

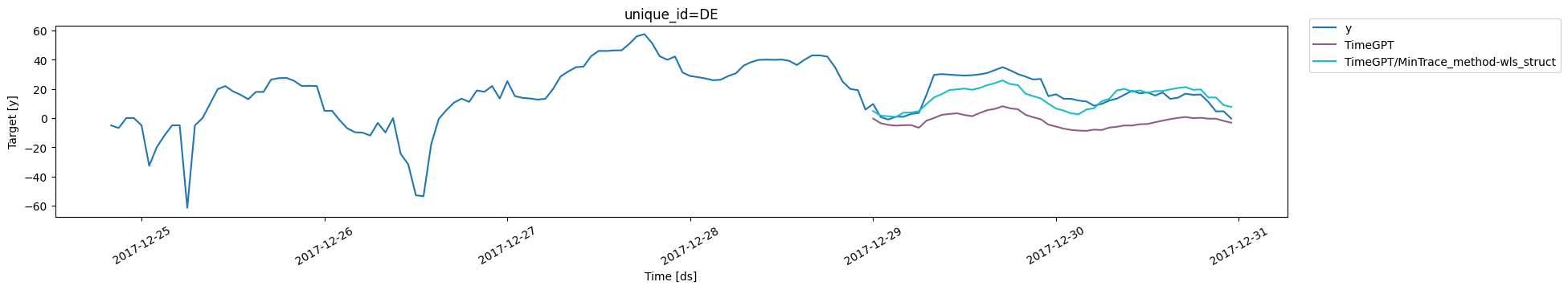

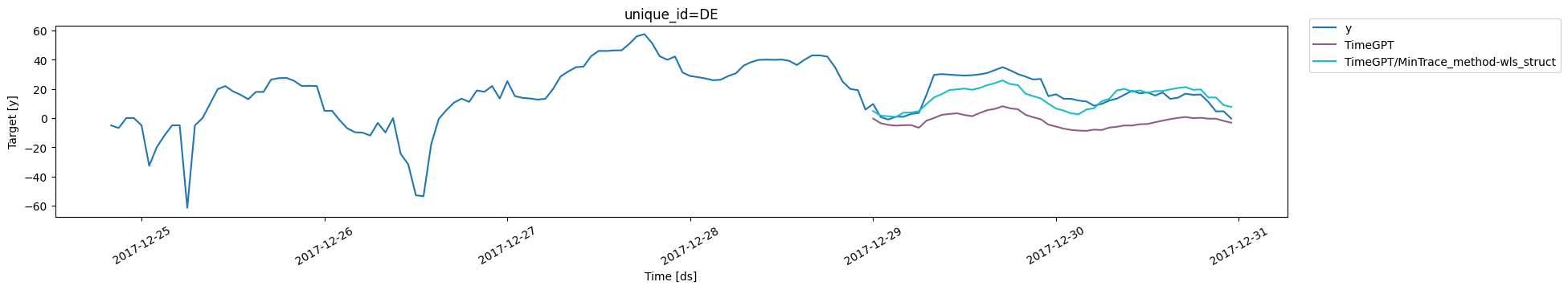

plot_series(

Y_train.query(

"temporal_id in @tags_train['1-hour-period']"

)[["y", "ds", "unique_id"]].iloc[-100:],

forecasts_df=Y_rec.query("temporal_id in @tags_test['1-hour-period']").drop(columns=["temporal_id"])

)

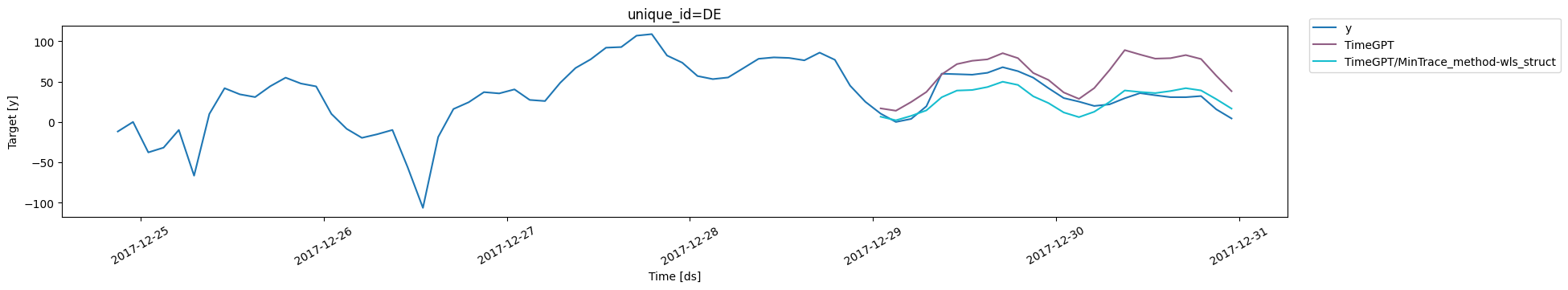

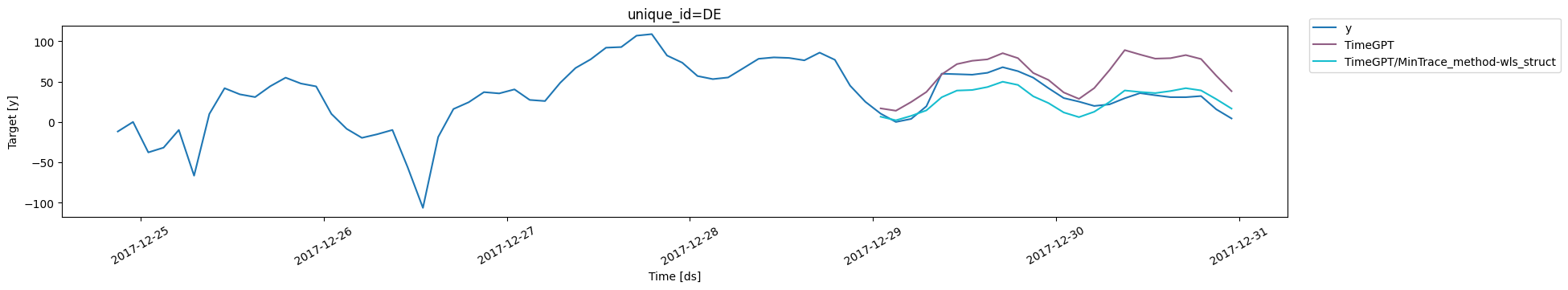

and for the 2-hour period forecasts:

plot_series(

Y_train.query(

"temporal_id in @tags_train['2-hour-period']"

)[["y", "ds", "unique_id"]].iloc[-50:],

forecasts_df=Y_rec.query("temporal_id in @tags_test['2-hour-period']").drop(columns=["temporal_id"])

)

We can now also verify that the forecasts are coherent with each other. For the first 2-hour period, the forecast after reconciliation is 6.63, and the sum of the reconciled forecasts for the first two 1-hour periods is 4.92 + 1.71 = 6.63. Hence, we now have more accurate and coherent forecasts across frequencies.

Y_rec.query(

"temporal_id in ['2-hour-period-1', '1-hour-period-1', '1-hour-period-2']"

)

|  | unique_id | temporal_id | ds | y | TimeGPT | TimeGPT/MinTrace_method-wls_struct |
|---|---|---|---|---|---|---|
| 0 | DE | 2-hour-period-1 | 2017-12-29 01:00:00 | 10.45 | 16.949455 | 6.625748 |
| 24 | DE | 1-hour-period-1 | 2017-12-29 00:00:00 | 9.73 | -0.241482 | 4.920372 |
| 25 | DE | 1-hour-period-2 | 2017-12-29 01:00:00 | 0.72 | -3.456478 | 1.705376 |

Conclusion

In this tutorial we have shown:

- How to create forecasts for multiple frequencies for the same dataset with TimeGPT

- How to improve the accuracy of these forecasts using temporal reconciliation techniques

Note that even though we created forecasts for two different frequencies, there is no need to use the forecast of the 2-hour period itself. The technique can also be used solely to improve the forecast of the 1-hour period.